I had a working v3 build of Redash running on Docker.

To upgrade to the latest v5, I stopped all my Docker containers and ran:

docker-compose run --rm server manage db upgrade

When I start the services again with docker-compose, I get this stack trace:

worker_1 | [2018-09-27 03:15:14,806][PID:1][ERROR][MainProcess] Task redash.tasks.refresh_queries[d1658aea-325c-43f2-8bbc-55d57dc09760] raised unexpected: ProgrammingError('(psycopg2.ProgrammingError) column queries.search_vector does not exist\nLINE 1: ...ule_failures, queries.options AS queries_options, queries.se...\n ^\n',)

worker_1 | Traceback (most recent call last):

worker_1 | File "/usr/local/lib/python2.7/dist-packages/celery/app/trace.py", line 240, in trace_task

worker_1 | R = retval = fun(*args, **kwargs)

worker_1 | File "/app/redash/worker.py", line 71, in __call__

worker_1 | return TaskBase.__call__(self, *args, **kwargs)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/celery/app/trace.py", line 438, in __protected_call__

worker_1 | return self.run(*args, **kwargs)

worker_1 | File "/app/redash/tasks/queries.py", line 275, in refresh_queries

worker_1 | for query in models.Query.outdated_queries():

worker_1 | File "/app/redash/models.py", line 1010, in outdated_queries

worker_1 | for query in queries:

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/orm/query.py", line 2925, in __iter__

worker_1 | return self._execute_and_instances(context)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/orm/query.py", line 2948, in _execute_and_instances

worker_1 | result = conn.execute(querycontext.statement, self._params)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/base.py", line 948, in execute

worker_1 | return meth(self, multiparams, params)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/sql/elements.py", line 269, in _execute_on_connection

worker_1 | return connection._execute_clauseelement(self, multiparams, params)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/base.py", line 1060, in _execute_clauseelement

worker_1 | compiled_sql, distilled_params

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/base.py", line 1200, in _execute_context

worker_1 | context)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/base.py", line 1413, in _handle_dbapi_exception

worker_1 | exc_info

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/util/compat.py", line 203, in raise_from_cause

worker_1 | reraise(type(exception), exception, tb=exc_tb, cause=cause)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/base.py", line 1193, in _execute_context

worker_1 | context)

worker_1 | File "/usr/local/lib/python2.7/dist-packages/sqlalchemy/engine/default.py", line 507, in do_execute

worker_1 | cursor.execute(statement, parameters)

worker_1 | ProgrammingError: (psycopg2.ProgrammingError) column queries.search_vector does not exist

worker_1 | LINE 1: ...ule_failures, queries.options AS queries_options, queries.se...

worker_1 | ^

worker_1 | [SQL: 'SELECT queries.query AS queries_query, queries.updated_at AS queries_updated_at, queries.created_at AS queries_created_at, queries.id AS queries_id, queries.version AS queries_version, queries.org_id AS queries_org_id, queries.data_source_id AS queries_data_source_id, queries.latest_query_data_id AS queries_latest_query_data_id, queries.name AS queries_name, queries.description AS queries_description, queries.query_hash AS queries_query_hash, queries.api_key AS queries_api_key, queries.user_id AS queries_user_id, queries.last_modified_by_id AS queries_last_modified_by_id, queries.is_archived AS queries_is_archived, queries.is_draft AS queries_is_draft, queries.schedule AS queries_schedule, queries.schedule_failures AS queries_schedule_failures, queries.options AS queries_options, queries.search_vector AS queries_search_vector, queries.tags AS queries_tags, query_results_1.id AS query_results_1_id, query_results_1.retrieved_at AS query_results_1_retrieved_at \nFROM queries LEFT OUTER JOIN query_results AS query_results_1 ON query_results_1.id = queries.latest_query_data_id \nWHERE queries.schedule IS NOT NULL ORDER BY queries.id'] (Background on this error at: http://sqlalche.me/e/f405)

worker_1 | [2018-09-27 03:15:14,814][PID:1][INFO][MainProcess] Task redash.tasks.cleanup_tasks[5048af86-525d-4c1c-85af-18d87f7ed370] succeeded in 0.00293156299995s: None

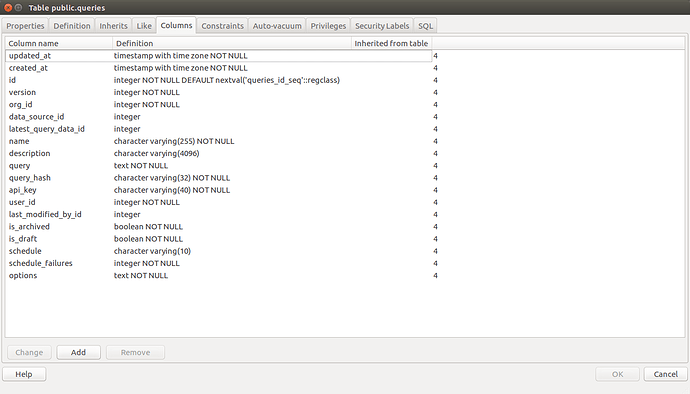

The Queries Table